Model selection workflow

This page shows how the scikit-learn-style meta-estimators in xyz can be used to search over embedding settings and interaction delays before running a final transfer entropy (TE) analysis.

Why this workflow exists

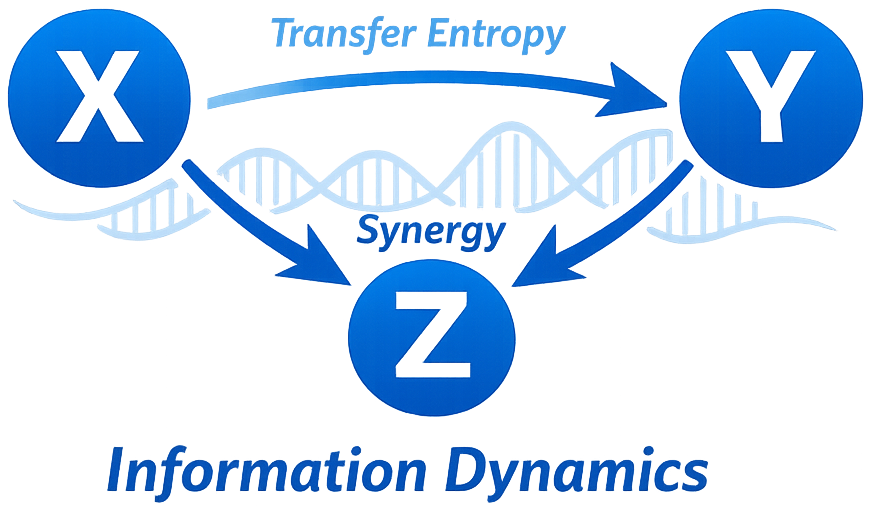

TRENTOOL-style TE analysis is not only about the low-level estimator. It also depends on:

choosing a sensible embedding dimension,

choosing an embedding spacing,

and choosing a plausible interaction delay.

The xyz search classes make these choices explicit and reproducible in a Pythonic, scikit-learn-like form.
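To make the first two choices concrete, here is a minimal NumPy sketch of a delay embedding. It is an illustration only, not xyz's internal code: `delay_embed` is a hypothetical helper, and the ordering of the columns (most recent sample first) is an assumption.

```python
import numpy as np


def delay_embed(x, dimension, tau):
    """Return rows [x[t], x[t - tau], ..., x[t - (dimension - 1) * tau]]."""
    x = np.asarray(x, dtype=float)
    start = (dimension - 1) * tau  # first index that has a full history
    cols = [x[start - k * tau : len(x) - k * tau] for k in range(dimension)]
    return np.column_stack(cols)


x = np.arange(10.0)
states = delay_embed(x, dimension=3, tau=2)
print(states.shape)  # (6, 3)
print(states[0])     # [4. 2. 0.]
```

The embedding dimension sets how many past samples make up one state, and the spacing sets how far apart those samples are. An interaction delay `u` then decides which driver state is paired with each target sample: the one from `u` samples earlier.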

Example: embedding and delay search

```python
import numpy as np

from xyz import (
    GaussianTransferEntropy,
    InteractionDelaySearchCV,
    RagwitzEmbeddingSearchCV,
)

# Simulate a target that depends on its own past and on the driver two samples back.
rng = np.random.default_rng(123)
n = 700
driver = rng.normal(size=n)
target = np.zeros(n)
for t in range(2, n):
    target[t] = 0.45 * target[t - 1] + 0.20 * target[t - 2] + 0.35 * driver[t - 2] + 0.1 * rng.normal()

# Column 0 is the target, column 1 is the driver.
data = np.column_stack([target, driver])

base = GaussianTransferEntropy(driver_indices=[1], target_indices=[0], lags=1)

# Step 1: search embedding dimension and spacing for the target.
embedding = RagwitzEmbeddingSearchCV(
    base,
    target_index=0,
    dimensions=(1, 2, 3),
    taus=(1, 2, 3),
).fit(data)

# Step 2: fix the best embedding, then scan candidate interaction delays.
delay = InteractionDelaySearchCV(
    base.set_params(**embedding.best_params_),
    delays=(1, 2, 3, 4, 5),
).fit(data)

print(embedding.best_params_, embedding.best_score_)
print(delay.best_delay_, delay.best_score_)
```
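As an independent cross-check of the delay search, the same simulated data can be scanned with a plain NumPy estimate of linear-Gaussian TE. This is a sketch under stated assumptions, not xyz's implementation: `residual_variance` and `linear_te` are hypothetical helpers, the target past is fixed at two lags, and TE is taken as half the log ratio of residual variances from two nested least-squares fits.

```python
import numpy as np


def residual_variance(y, X):
    """Variance of y after an ordinary least-squares fit on X plus an intercept."""
    X1 = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(X1, y, rcond=None)
    return np.var(y - X1 @ coef)


def linear_te(source, target, delay, dimension=2):
    """Linear-Gaussian TE (in nats) from source to target at a single delay."""
    start = max(dimension, delay)
    future = target[start:]
    # Target past: target[t - 1], ..., target[t - dimension]
    past = np.column_stack(
        [target[start - k : len(target) - k] for k in range(1, dimension + 1)]
    )
    # Candidate driver sample: source[t - delay]
    lagged = source[start - delay : len(source) - delay]
    reduced = residual_variance(future, past)
    full = residual_variance(future, np.column_stack([past, lagged]))
    return 0.5 * np.log(reduced / full)


# Same simulation as in the example: the driver enters the target at lag 2.
rng = np.random.default_rng(123)
n = 700
driver = rng.normal(size=n)
target = np.zeros(n)
for t in range(2, n):
    target[t] = 0.45 * target[t - 1] + 0.20 * target[t - 2] + 0.35 * driver[t - 2] + 0.1 * rng.normal()

delays = (1, 2, 3, 4, 5)
profile = {u: linear_te(driver, target, u) for u in delays}
best_delay = max(profile, key=profile.get)
print(best_delay)  # 2, the lag used in the simulation
```

Because the two regressions are nested, every value in the profile is non-negative. Only the delay that matches the simulated coupling removes a substantial share of the residual variance.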

Interactive example

The two figures below show:

a heatmap of the Ragwitz-style embedding search surface,

a delay profile after fixing the best embedding.
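The numbers behind an embedding search surface of this kind can be approximated by hand. The sketch below is a simplified stand-in for the Ragwitz criterion, not xyz's implementation: it scores each (dimension, spacing) pair by the one-step prediction error of a k-nearest-neighbour predictor. The helper name `ragwitz_style_error`, the fixed `k=4`, the one-step horizon and the absence of a Theiler window are all assumptions made for brevity.

```python
import numpy as np


def ragwitz_style_error(x, dimension, tau, k=4):
    """Mean squared one-step error of a k-nearest-neighbour predictor."""
    start = (dimension - 1) * tau
    # State at time t: [x[t], x[t - tau], ..., x[t - (dimension - 1) * tau]]
    states = np.column_stack(
        [x[start - j * tau : len(x) - 1 - j * tau] for j in range(dimension)]
    )
    future = x[start + 1 :]  # value one step after each state
    # Pairwise squared distances; a state may not be its own neighbour.
    dist = ((states[:, None, :] - states[None, :, :]) ** 2).sum(axis=-1)
    np.fill_diagonal(dist, np.inf)
    neighbours = np.argpartition(dist, k, axis=1)[:, :k]
    prediction = future[neighbours].mean(axis=1)
    return np.mean((future - prediction) ** 2)


# Same simulated target as in the example above.
rng = np.random.default_rng(123)
n = 700
driver = rng.normal(size=n)
target = np.zeros(n)
for t in range(2, n):
    target[t] = 0.45 * target[t - 1] + 0.20 * target[t - 2] + 0.35 * driver[t - 2] + 0.1 * rng.normal()

dimensions = (1, 2, 3)
taus = (1, 2, 3)
# Rows index the dimension, columns index the spacing.
surface = np.array(
    [[ragwitz_style_error(target, d, tau) for tau in taus] for d in dimensions]
)
i, j = np.unravel_index(np.argmin(surface), surface.shape)
print(surface.round(4))
print("lowest error at dimension", dimensions[i], "and tau", taus[j])
```

With a dimension of 1 the spacing has no effect, so the first row of `surface` is constant. Lower values mean better prediction, so the preferred setting is the minimum of the surface.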

Interpretation

A smooth embedding surface is usually easier to trust than a highly erratic one.

Delay reconstruction is most convincing when the TE profile has a clear and interpretable maximum.

In real data, do not rely on model selection alone; combine it with significance testing and domain knowledge.
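One common way to add significance testing is a surrogate test: recompute TE after destroying the driver-target timing and compare the observed value with that null distribution. The sketch below is a plain NumPy illustration, not an xyz API. `linear_te` is a hypothetical helper, and shuffling the driver is only a reasonable surrogate here because the simulated driver is white noise. An autocorrelated driver would need surrogates that preserve its own dynamics.

```python
import numpy as np


def linear_te(source, target, delay, dimension=2):
    """Linear-Gaussian TE (in nats) from two nested least-squares fits."""
    start = max(dimension, delay)
    y = target[start:]
    past = [target[start - k : len(target) - k] for k in range(1, dimension + 1)]
    lagged = source[start - delay : len(source) - delay]

    def resid_var(columns):
        X = np.column_stack([np.ones(len(y)), *columns])
        coef, *_ = np.linalg.lstsq(X, y, rcond=None)
        return np.var(y - X @ coef)

    return 0.5 * np.log(resid_var(past) / resid_var(past + [lagged]))


# Same simulation as in the example: the driver enters the target at lag 2.
rng = np.random.default_rng(123)
n = 700
driver = rng.normal(size=n)
target = np.zeros(n)
for t in range(2, n):
    target[t] = 0.45 * target[t - 1] + 0.20 * target[t - 2] + 0.35 * driver[t - 2] + 0.1 * rng.normal()

observed = linear_te(driver, target, delay=2)

# Null distribution: TE after shuffling the driver in time.
n_surrogates = 199
null = np.array(
    [linear_te(rng.permutation(driver), target, delay=2) for _ in range(n_surrogates)]
)

# Add-one correction so the p-value can never be exactly zero.
p_value = (1 + np.sum(null >= observed)) / (1 + n_surrogates)
print(observed, p_value)
```

The smallest p-value this test can report is `1 / (1 + n_surrogates)`, so the number of surrogates limits how strong a claim the test can support.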