Theory and notation#

This page defines the notation used throughout xyz and summarizes the mathematical quantities estimated by the library.

Why this matters#

The same high-level quantity, such as transfer entropy, can be estimated under very different assumptions:

- a linear-Gaussian model,
- a nonparametric nearest-neighbor model,
- a fixed-radius kernel approximation,
- or a discrete/binned empirical distribution.

The estimators in xyz are therefore best understood as different numerical approximations to the same information-theoretic functionals.

Notation#

Let \(Y_t\) be the target process at time \(t\). Its embedded past is written as

\[
Y_t^- = \left(Y_{t-\tau},\, Y_{t-2\tau},\, \dots,\, Y_{t-d\tau}\right),
\]

where \(d\) is the embedding dimension and \(\tau\) is the embedding spacing. Likewise:

- \(X_t^-\) denotes the embedded past of a driver process,
- \(Z_t^-\) denotes the embedded past of one or more conditioning processes,
- \(u\) denotes an interaction delay between source and target when a delay-specific TE estimator is used.

In xyz, these state vectors are assembled by the delay-embedding helpers in xyz.preprocessing.
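As a rough illustration of what such an embedding produces, the sketch below builds past-state vectors with plain NumPy. The helper name `embed_past` and the lag convention (rows of the form `x[t - tau], x[t - 2*tau], ..., x[t - d*tau]`) are assumptions made for this example; they are not the xyz.preprocessing API, whose signatures and conventions may differ.

```python
import numpy as np


def embed_past(x, d, tau):
    """Hypothetical helper (not part of xyz): delay-embed a 1-D series.

    Returns the present values x[t] and, for each of them, the past
    state (x[t - tau], x[t - 2*tau], ..., x[t - d*tau]).
    """
    x = np.asarray(x, dtype=float)
    start = d * tau  # first time index that has a complete past
    if start >= len(x):
        raise ValueError("series too short for this (d, tau)")
    t = np.arange(start, len(x))
    lags = tau * np.arange(1, d + 1)
    past = x[t[:, None] - lags[None, :]]  # shape (len(t), d)
    present = x[start:]
    return present, past


y = np.arange(10.0)
present, past = embed_past(y, d=3, tau=2)
```

With `d=3` and `tau=2`, the first usable time index is 6, so the first past state collects the samples at indices 4, 2, and 0.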

Units#

Unless otherwise stated, values are reported in nats:

\[
1\ \text{nat} = \frac{1}{\ln 2}\ \text{bits} \approx 1.443\ \text{bits}.
\]
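If results need to be compared with tools that report bits, the conversion is a single division by \(\ln 2\). The helper names below are illustrative only and are not claimed to exist in xyz.

```python
import math

NATS_PER_BIT = math.log(2.0)  # one bit equals ln(2) nats


def nats_to_bits(value_nats):
    """Convert an information quantity from nats to bits."""
    return value_nats / NATS_PER_BIT


def bits_to_nats(value_bits):
    """Convert an information quantity from bits to nats."""
    return value_bits * NATS_PER_BIT


one_nat_in_bits = nats_to_bits(1.0)
```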

Core information quantities#

Entropy#

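For a continuous random vector \(Y \in \mathbb{R}^d\) with density \(p\),

\[
H(Y) = -\int_{\mathbb{R}^d} p(y) \log p(y)\, dy.
\]

If \(Y\) is Gaussian with covariance matrix \(\Sigma\),

\[
H(Y) = \frac{1}{2} \log\!\left((2\pi e)^d \det \Sigma\right).
\]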

This is the quantity estimated by xyz.MVNEntropy.
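The Gaussian closed form can be evaluated directly from a covariance matrix. The sketch below is a NumPy illustration of that formula, not the implementation of xyz.MVNEntropy; the function name `gaussian_entropy` is an assumption of this example.

```python
import numpy as np


def gaussian_entropy(cov):
    """Differential entropy (nats) of a Gaussian with covariance `cov`.

    Uses H = 0.5 * (d * log(2*pi*e) + log det(cov)).
    """
    cov = np.atleast_2d(np.asarray(cov, dtype=float))
    d = cov.shape[0]
    sign, logdet = np.linalg.slogdet(cov)  # stable log-determinant
    if sign <= 0:
        raise ValueError("covariance must be positive definite")
    return 0.5 * (d * np.log(2.0 * np.pi * np.e) + logdet)


rng = np.random.default_rng(0)
samples = rng.normal(scale=2.0, size=(5000, 1))  # true variance is 4
h_plugin = gaussian_entropy(np.cov(samples, rowvar=False))
h_true = gaussian_entropy([[4.0]])
```

Plugging in the sample covariance gives a parametric estimate that approaches the true value as the sample grows, provided the Gaussian assumption holds.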

Conditional entropy#

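For two random variables \(X\) and \(Y\),

\[
H(Y \mid X) = H(X, Y) - H(X).
\]

In a regression-based Gaussian setting, this can be expressed via the covariance of the residual process:

\[
H(Y \mid X) = \frac{1}{2} \log\!\left((2\pi e)^{d_Y} \det \Sigma_\varepsilon\right),
\]

where \(\varepsilon\) are the residuals from regressing \(Y\) on \(X\), \(\Sigma_\varepsilon\) is their covariance matrix, and \(d_Y\) is the dimension of \(Y\).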
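A minimal sketch of the regression route, assuming ordinary least squares with an intercept and the maximum-likelihood (divide by \(n\)) residual covariance. The function name is hypothetical, and xyz may normalize or regularize differently.

```python
import numpy as np


def gaussian_conditional_entropy(y, x):
    """H(Y | X) in nats under a linear-Gaussian model (illustrative)."""
    y = np.asarray(y, dtype=float).reshape(len(y), -1)
    x = np.asarray(x, dtype=float).reshape(len(x), -1)
    design = np.column_stack([np.ones(len(x)), x])  # intercept + regressors
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    resid = y - design @ coef
    cov_resid = np.atleast_2d(resid.T @ resid / len(y))  # ML covariance
    d = y.shape[1]
    _, logdet = np.linalg.slogdet(cov_resid)
    return 0.5 * (d * np.log(2.0 * np.pi * np.e) + logdet)


rng = np.random.default_rng(1)
x = rng.normal(size=4000)
y = 0.8 * x + rng.normal(scale=0.5, size=4000)  # residual variance 0.25
h_y_given_x = gaussian_conditional_entropy(y, x)
h_expected = 0.5 * np.log(2.0 * np.pi * np.e * 0.25)
```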

Mutual information#

Mutual information measures statistical dependence:

\[
I(X; Y) = H(X) + H(Y) - H(X, Y) = H(Y) - H(Y \mid X).
\]

It is symmetric in \(X\) and \(Y\) and nonnegative in the population. In finite samples, nonparametric estimators can produce small negative values because of estimation variance.
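For jointly Gaussian variables the entropy terms reduce to log-determinants, and for a bivariate Gaussian with correlation \(\rho\) the result is \(-\tfrac{1}{2}\log(1 - \rho^2)\). The sketch below checks this numerically; `gaussian_mi` is a name chosen for this example and is not an xyz function.

```python
import numpy as np


def gaussian_mi(x, y):
    """Gaussian mutual information (nats) from sample covariances."""
    x = np.asarray(x, dtype=float).reshape(len(x), -1)
    y = np.asarray(y, dtype=float).reshape(len(y), -1)

    def logdet_cov(a):
        cov = np.atleast_2d(np.cov(a, rowvar=False))
        return np.linalg.slogdet(cov)[1]

    # The (2*pi*e) factors cancel in H(X) + H(Y) - H(X, Y).
    joint = np.column_stack([x, y])
    return 0.5 * (logdet_cov(x) + logdet_cov(y) - logdet_cov(joint))


rho = 0.6
rng = np.random.default_rng(4)
x = rng.normal(size=5000)
y = rho * x + np.sqrt(1.0 - rho**2) * rng.normal(size=5000)
mi_xy = gaussian_mi(x, y)
mi_true = -0.5 * np.log(1.0 - rho**2)
```

Swapping the arguments leaves the value unchanged, which reflects the symmetry of mutual information.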

Conditional mutual information#

Conditional mutual information measures dependence that remains after adjusting for a third variable:

\[
I(X; Y \mid Z) = H(Y \mid Z) - H(Y \mid X, Z).
\]

This is the core building block of transfer entropy.
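To see why estimators typically need four entropy terms, conditional mutual information can be expanded into joint entropies using \(H(A \mid B) = H(A, B) - H(B)\):

\[
\begin{aligned}
I(X; Y \mid Z) &= H(Y \mid Z) - H(Y \mid X, Z) \\
&= \bigl[H(Y, Z) - H(Z)\bigr] - \bigl[H(X, Y, Z) - H(X, Z)\bigr] \\
&= H(X, Z) + H(Y, Z) - H(Z) - H(X, Y, Z).
\end{aligned}
\]

Substituting the present of the target for \(Y\), the source past for \(X\), and the target past for \(Z\) yields the four-entropy form of transfer entropy.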

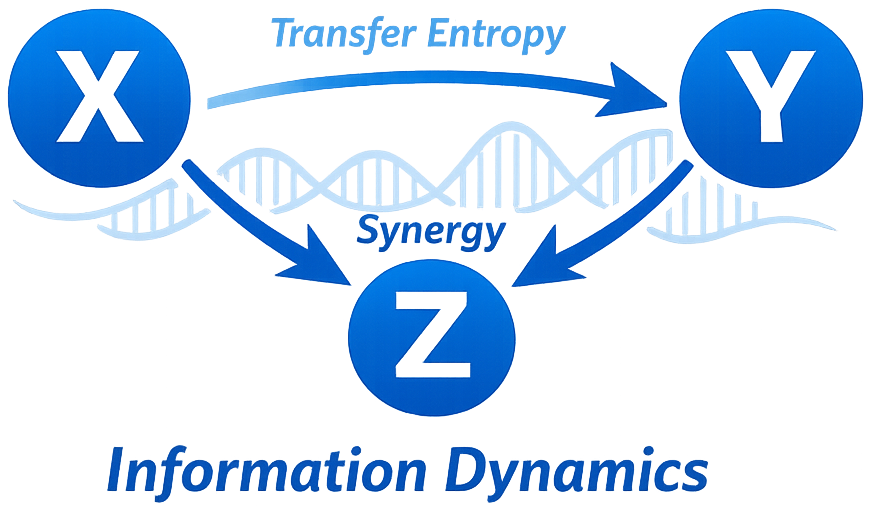

Transfer entropy#

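Bivariate transfer entropy from \(X\) to \(Y\) quantifies predictive information flow from the past of \(X\) to the present of \(Y\) beyond the information already contained in the past of \(Y\):

\[
\mathrm{TE}_{X \to Y} = I\!\left(Y_t ; X_t^- \mid Y_t^-\right) = H\!\left(Y_t \mid Y_t^-\right) - H\!\left(Y_t \mid Y_t^-, X_t^-\right).
\]

If a separate interaction delay \(u\) is used, the source state can be written more explicitly as \(X_{t-u}^-\), giving

\[
\mathrm{TE}_{X \to Y}(u) = I\!\left(Y_t ; X_{t-u}^- \mid Y_t^-\right).
\]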

This distinction is important in TRENTOOL-style delay reconstruction.
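Under a linear-Gaussian model, transfer entropy reduces to half the log ratio of two residual variances: one from regressing the present of the target on its own past, and one from also including the source past. The sketch below assumes one-step pasts (\(d = 1\), \(\tau = 1\), \(u = 1\)) and a simulated system; function names are hypothetical and this is not the xyz estimator.

```python
import numpy as np


def residual_variance(target, regressors):
    """ML residual variance of an OLS fit with intercept."""
    design = np.column_stack([np.ones(len(target))] + list(regressors))
    coef, *_ = np.linalg.lstsq(design, target, rcond=None)
    resid = target - design @ coef
    return float(resid @ resid / len(target))


def linear_te(source, target):
    """Linear-Gaussian TE source -> target (nats), one-step pasts."""
    present = target[1:]
    target_past = target[:-1]
    source_past = source[:-1]
    var_restricted = residual_variance(present, [target_past])
    var_full = residual_variance(present, [target_past, source_past])
    return 0.5 * np.log(var_restricted / var_full)


# Simulated system in which X drives Y with a one-step delay.
rng = np.random.default_rng(2)
n = 5000
x = rng.normal(size=n)
y = np.zeros(n)
for t in range(1, n):
    y[t] = 0.5 * y[t - 1] + 0.7 * x[t - 1] + rng.normal(scale=0.5)

te_xy = linear_te(x, y)  # should be clearly positive
te_yx = linear_te(y, x)  # should be near zero
```

Because the two regressions are nested, this estimate cannot be negative; the asymmetry between the two directions is what signals directed coupling in this toy system.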

Partial transfer entropy#

Partial transfer entropy adjusts for additional confounding processes \(Z_t^-\):

\[
\mathrm{PTE}_{X \to Y \mid Z} = I\!\left(Y_t ; X_t^- \mid Y_t^-, Z_t^-\right).
\]

This is the natural choice when the apparent effect of \(X\) on \(Y\) could be mediated or confounded by known controls.

Self-entropy / information storage#

xyz uses the term self-entropy for information storage:

\[
\mathrm{SE}_Y = I\!\left(Y_t ; Y_t^-\right) = H(Y_t) - H\!\left(Y_t \mid Y_t^-\right).
\]

This quantifies how much of the present of a process is predictable from its own past.
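As a worked example under stated assumptions, consider a stationary Gaussian AR(1) process \(Y_t = a Y_{t-1} + \varepsilon_t\) with \(|a| < 1\), \(\varepsilon_t \sim \mathcal{N}(0, \sigma^2)\), and a past that contains \(Y_{t-1}\). The stationary variance is \(\sigma^2 / (1 - a^2)\) and the conditional variance given the past is \(\sigma^2\), so

\[
I\!\left(Y_t ; Y_t^-\right) = \frac{1}{2} \log \frac{\sigma^2 / (1 - a^2)}{\sigma^2} = -\frac{1}{2} \log\!\left(1 - a^2\right).
\]

For \(a = 0.8\) this gives roughly \(0.51\) nats, and the value tends to zero as \(a \to 0\), when the past carries no predictive information.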

Estimator families in xyz#

Gaussian / linear#

These estimators assume the relevant distributions are well approximated by linear regressions with Gaussian residuals. They are fast, interpretable, and provide analytical F-tests for TE, PTE, and self-entropy.
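For a scalar target, the link between a Gaussian TE estimate and a nested-regression F statistic can be written out explicitly. This is the textbook construction; whether xyz uses exactly this form and these degrees of freedom is an assumption here. Let \(\mathrm{RSS}_r\) and \(\mathrm{RSS}_f\) be the residual sums of squares of the restricted model (target past only) and the full model (target past plus source past), \(q\) the number of source regressors, \(p\) the number of parameters in the full model, and \(n\) the number of samples. Then

\[
\widehat{\mathrm{TE}} = \frac{1}{2} \log \frac{\mathrm{RSS}_r}{\mathrm{RSS}_f},
\qquad
F = \frac{(\mathrm{RSS}_r - \mathrm{RSS}_f)/q}{\mathrm{RSS}_f/(n - p)} = \frac{n - p}{q}\left(e^{2\,\widehat{\mathrm{TE}}} - 1\right),
\]

and under the null hypothesis of no transfer, with Gaussian residuals, \(F\) follows an \(F(q,\, n - p)\) distribution.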

KSG / nearest-neighbor#

These estimators are nonparametric and approximate entropies from nearest-neighbor distances. They are more flexible than Gaussian estimators, especially for nonlinear dependence, but require more data and more careful parameter tuning.

Kernel / fixed-radius#

These estimators replace the fixed-\(k\) neighborhood of KSG with a fixed radius \(r\). They are intuitive and useful for sensitivity analysis, but their performance can change substantially with the chosen radius.

Discrete / binning#

These estimators quantize the data and estimate probabilities from empirical frequencies. They are especially appropriate for symbolic or truly discrete state spaces, but can become sparse in high-dimensional embeddings.
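A plug-in estimate from empirical frequencies can be sketched with the standard library alone. The function below computes mutual information between two symbol sequences; it is an illustration of the counting approach, not the xyz discrete estimator, and the name `plugin_mi` is hypothetical.

```python
from collections import Counter
import math


def plugin_mi(xs, ys):
    """Plug-in mutual information (nats) from empirical frequencies."""
    n = len(xs)
    joint = Counter(zip(xs, ys))
    px = Counter(xs)
    py = Counter(ys)
    mi = 0.0
    for (a, b), count in joint.items():
        # p(a, b) * log( p(a, b) / (p(a) * p(b)) ), written with counts
        mi += (count / n) * math.log(count * n / (px[a] * py[b]))
    return mi


xs = [0, 0, 1, 1] * 25
ys_copy = list(xs)              # fully dependent on xs
ys_indep = [0, 1, 0, 1] * 25    # empirically independent of xs

mi_copy = plugin_mi(xs, ys_copy)
mi_indep = plugin_mi(xs, ys_indep)
```

The number of joint cells grows exponentially with the embedding dimension, which is the sparsity problem noted above: with many cells and few samples, most counts are zero or one and the plug-in estimate becomes biased.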

How to choose an estimator family#

| Family | Best when | Main strengths | Main risks |
|---|---|---|---|
| Gaussian | Dynamics are approximately linear and homoscedastic | Fast, stable, interpretable, analytical significance | Misses nonlinear structure |
| KSG | Nonlinear dependence is plausible and sample size is adequate | Flexible, widely used, closest to TRENTOOL-style continuous TE | Higher variance, more tuning, more expensive |
| Kernel | A local geometric neighborhood view is desirable | Simple radius interpretation, useful for robustness sweeps | Highly sensitive to the radius \(r\) |
| Discrete | Data are symbolic, categorical, or deliberately quantized | Conceptually simple, easy to interpret | Binning bias and state-space sparsity |

ITS / TSTOOL / TRENTOOL alignment#

The continuous nearest-neighbor estimators in xyz follow the same broad strategy as ITS/TSTOOL/TRENTOOL:

1. find a neighborhood in the highest-dimensional joint space,
2. project that neighborhood into lower-dimensional marginal spaces,
3. use projected counts to estimate entropy differences with reduced bias.

For the TE/PTE/SE parity tests, xyz excludes self-matches in the projected count stage, mirroring the ITS range_search(..., past=0) behavior.
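The three steps (joint-space neighborhood, projection, projected counts) can be sketched for the simplest case, mutual information between two variables, using the first KSG algorithm. This is a brute-force \(O(n^2)\) NumPy illustration with Chebyshev distances and self-matches excluded; it does not reproduce the ITS range search or the xyz implementation, and all function names are assumptions of this example.

```python
import numpy as np


def digamma_table(n_max):
    """psi(m) for integers m = 1..n_max, stored at index m (index 0 unused)."""
    harmonic = np.concatenate(([0.0, 0.0], np.cumsum(1.0 / np.arange(1, n_max))))
    return harmonic - np.euler_gamma  # psi(m) = -gamma + H_{m-1}


def ksg_mi(x, y, k=4):
    """KSG (algorithm 1) mutual information estimate in nats."""
    x = np.asarray(x, dtype=float).reshape(len(x), -1)
    y = np.asarray(y, dtype=float).reshape(len(y), -1)
    n = len(x)
    # Pairwise Chebyshev distances in each marginal space.
    dx = np.max(np.abs(x[:, None, :] - x[None, :, :]), axis=2)
    dy = np.max(np.abs(y[:, None, :] - y[None, :, :]), axis=2)
    # Step 1: k-th neighbor distance in the joint space, self excluded.
    dz = np.maximum(dx, dy)
    np.fill_diagonal(dz, np.inf)
    eps = np.sort(dz, axis=1)[:, k - 1]
    # Steps 2 and 3: count marginal neighbors strictly inside eps;
    # subtracting 1 removes the self-match (distance zero).
    nx = np.sum(dx < eps[:, None], axis=1) - 1
    ny = np.sum(dy < eps[:, None], axis=1) - 1
    psi = digamma_table(n)
    return psi[k] + psi[n] - np.mean(psi[nx + 1] + psi[ny + 1])


rng = np.random.default_rng(3)
n = 1000
x = rng.normal(size=n)
y_dep = 0.8 * x + 0.6 * rng.normal(size=n)  # correlation 0.8
y_ind = rng.normal(size=n)

mi_dep = ksg_mi(x, y_dep)
mi_ind = ksg_mi(x, y_ind)
```

Using the same joint-space radius for both marginal counts is what lets the bias terms of the individual entropy estimates largely cancel. For TE or PTE the same pattern applies with more marginal spaces, and a tree-based range search replaces the dense distance matrices in practice.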

The TRENTOOL workflow then layers additional methodology on top of those core estimators: ACT-aware trial selection, Ragwitz embedding search, interaction delay reconstruction, surrogate testing, and group-level harmonization. Those workflow components are the bridge between low-level estimator parity and a full causal-analysis pipeline.