Gaussian and linear estimators

The Gaussian family is the right starting point when you believe the dependence structure is mostly linear and the innovation process is reasonably close to Gaussian.

Implemented classes

xyz.MVNEntropy

xyz.MVLNEntropy

xyz.MVParetoEntropy (placeholder, not implemented)

xyz.MVExponentialEntropy (placeholder, not implemented)

xyz.MVCondEntropy

xyz.MVNMutualInformation

xyz.GaussianTransferEntropy

xyz.GaussianPartialTransferEntropy

xyz.GaussianSelfEntropy

xyz.GaussianCopulaMutualInformation

xyz.GaussianCopulaConditionalMutualInformation

xyz.GaussianCopulaTransferEntropy

What these estimators compute

Multivariate Gaussian entropy

For \(Y \sim \mathcal{N}(\mu, \Sigma)\) with dimension \(d\),

\[
H(Y) = \tfrac{1}{2}\log\!\left((2\pi e)^{d}\,\det\Sigma\right).
\]

This is estimated directly from the sample covariance matrix in MVNEntropy.
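As an illustration of the formula, the NumPy sketch below computes the plug-in entropy from a sample covariance matrix. It is not the MVNEntropy implementation: the function names are hypothetical, results are in nats, and no small-sample bias correction is applied (the library may differ on any of these points).

```python
import numpy as np


def gaussian_entropy_from_cov(cov):
    """Differential entropy in nats of N(mu, cov): 0.5 * log((2*pi*e)^d * det(cov))."""
    cov = np.atleast_2d(cov)
    d = cov.shape[0]
    sign, logdet = np.linalg.slogdet(cov)  # numerically safer than log(det(cov))
    if sign <= 0:
        raise ValueError("covariance matrix must be positive definite")
    return 0.5 * (d * np.log(2 * np.pi * np.e) + logdet)


def mvn_entropy(data):
    """Plug-in estimate: rows of `data` are samples, columns are the d components of Y."""
    cov = np.cov(data, rowvar=False)  # sample covariance (n - 1 denominator)
    return gaussian_entropy_from_cov(cov)


rng = np.random.default_rng(0)
true_cov = np.array([[1.0, 0.5], [0.5, 2.0]])
samples = rng.multivariate_normal(mean=[0.0, 0.0], cov=true_cov, size=50_000)

print(gaussian_entropy_from_cov(true_cov))  # analytic entropy
print(mvn_entropy(samples))                 # sample estimate, close to the analytic value
```

Using `slogdet` instead of `det` avoids overflow or underflow of the determinant when \(d\) is large.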

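Conditional entropy from linear regression

If \(Y\) is linearly regressed on \(X\),

\[
Y = c + BX + \varepsilon, \qquad \varepsilon \sim \mathcal{N}(0, \Sigma_{\varepsilon}),
\]

then the Gaussian conditional entropy is determined by the residual covariance:

\[
H(Y \mid X) = \tfrac{1}{2}\log\!\left((2\pi e)^{d}\,\det\Sigma_{\varepsilon}\right).
\]

This is the quantity returned by MVCondEntropy.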

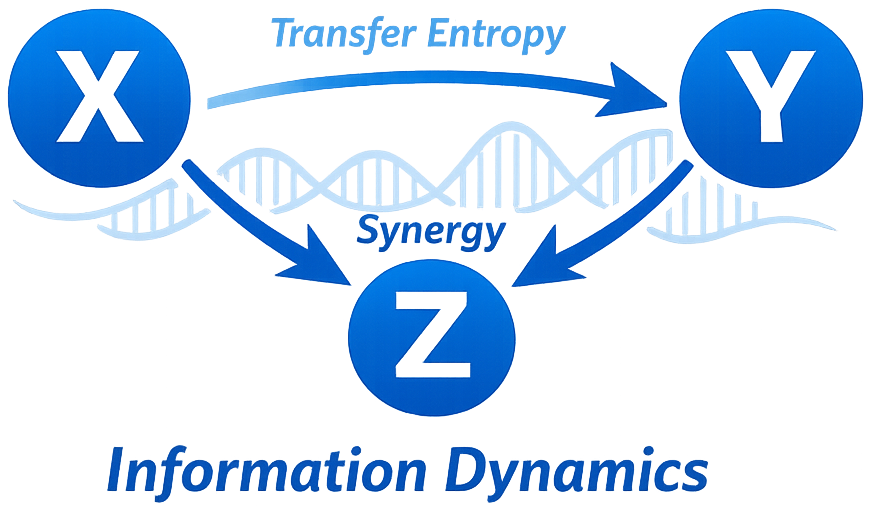
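A minimal sketch of the residual-covariance recipe, assuming ordinary least squares with an intercept (the helper names are hypothetical and this is not the MVCondEntropy code). The toy data are built so that the residual variance is 0.25, making \(H(Y \mid X) = \tfrac{1}{2}\log(2\pi e \cdot 0.25)\) known in advance.

```python
import numpy as np


def gaussian_entropy_from_cov(cov):
    """Differential entropy in nats of a Gaussian with covariance `cov`."""
    cov = np.atleast_2d(cov)
    d = cov.shape[0]
    _, logdet = np.linalg.slogdet(cov)
    return 0.5 * (d * np.log(2 * np.pi * np.e) + logdet)


def gaussian_conditional_entropy(y, x):
    """H(Y | X) under a linear-Gaussian model, from OLS residuals.

    y: (n, d_y) or (n,) target samples; x: (n, d_x) or (n,) regressors.
    """
    y = np.asarray(y, dtype=float).reshape(len(y), -1)
    x = np.asarray(x, dtype=float).reshape(len(x), -1)
    design = np.column_stack([np.ones(len(x)), x])  # intercept c plus regressors X
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    residuals = y - design @ coef                   # estimate of epsilon
    resid_cov = np.cov(residuals, rowvar=False)     # estimate of Sigma_epsilon
    return gaussian_entropy_from_cov(resid_cov)


rng = np.random.default_rng(1)
x = rng.standard_normal(20_000)
y = 2.0 * x + 0.5 * rng.standard_normal(20_000)  # residual variance 0.25

print(gaussian_conditional_entropy(y, x))
print(0.5 * np.log(2 * np.pi * np.e * 0.25))     # analytic H(Y | X)
```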

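Gaussian transfer entropy

For a target \(Y_t\), driver \(X_t\), target past \(Y_t^-\), and driver past \(X_t^-\), Gaussian transfer entropy is estimated as

\[
\mathrm{TE}_{X \to Y} = H(Y_t \mid Y_t^-) - H(Y_t \mid Y_t^-, X_t^-) = \tfrac{1}{2}\log\frac{\det\Sigma(Y_t \mid Y_t^-)}{\det\Sigma(Y_t \mid Y_t^-, X_t^-)},
\]

where \(\Sigma(\cdot \mid \cdot)\) is the residual covariance of the corresponding linear regression.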

Operationally, this is the difference between the entropy of:

residuals from the restricted regression \(Y_t \sim Y_t^-\), and

residuals from the unrestricted regression \(Y_t \sim Y_t^- + X_t^-\).
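The restricted/unrestricted recipe can be sketched in NumPy for a univariate driver and target, using `lags` consecutive past values of each. This is a hedged illustration, not the GaussianTransferEntropy implementation; `lagged`, `residual_logdet` and `gaussian_te` are hypothetical helpers. The synthetic system \(Y_t = 0.6Y_{t-1} + 0.5X_{t-1} + \varepsilon_t\), with unit-variance white \(X\) and \(\varepsilon\), has restricted residual variance 1.25 and unrestricted residual variance 1, so \(\mathrm{TE}_{X \to Y} = \tfrac{1}{2}\log 1.25 \approx 0.112\) nats, while \(\mathrm{TE}_{Y \to X} = 0\).

```python
import numpy as np


def lagged(series, lags, start):
    """Columns series[t-1], ..., series[t-lags] for rows t = start, ..., len(series) - 1."""
    n = len(series)
    return np.column_stack([series[start - l : n - l] for l in range(1, lags + 1)])


def residual_logdet(target, regressors):
    """log det of the OLS residual covariance of `target` on an intercept plus `regressors`."""
    design = np.column_stack([np.ones(len(target)), regressors])
    coef, *_ = np.linalg.lstsq(design, target, rcond=None)
    resid = target - design @ coef
    _, logdet = np.linalg.slogdet(np.atleast_2d(np.cov(resid, rowvar=False)))
    return logdet


def gaussian_te(x, y, lags=1):
    """Gaussian TE X -> Y in nats; the (2*pi*e)^d factors cancel in the difference."""
    target = y[lags:]
    y_past = lagged(y, lags, start=lags)
    x_past = lagged(x, lags, start=lags)
    restricted = residual_logdet(target, y_past)                               # Y_t ~ Y_t^-
    unrestricted = residual_logdet(target, np.column_stack([y_past, x_past]))  # Y_t ~ Y_t^- + X_t^-
    return 0.5 * (restricted - unrestricted)


rng = np.random.default_rng(2)
n = 20_000
x = rng.standard_normal(n)
noise = rng.standard_normal(n)
y = np.zeros(n)
for t in range(1, n):
    y[t] = 0.6 * y[t - 1] + 0.5 * x[t - 1] + noise[t]

te_xy = gaussian_te(x, y, lags=2)  # close to 0.5 * log(1.25)
te_yx = gaussian_te(y, x, lags=2)  # close to 0: X ignores the past of Y
print(te_xy, te_yx)
```

Because the unrestricted regression nests the restricted one, its residual variance can only be smaller, so this estimator is never negative; with finite samples it has a small positive bias even when the true TE is zero.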

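Gaussian partial transfer entropy adds controls \(Z_t^-\) to both regressions:

\[
\mathrm{TE}_{X \to Y \mid Z} = H(Y_t \mid Y_t^-, Z_t^-) - H(Y_t \mid Y_t^-, X_t^-, Z_t^-).
\]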
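To see why the controls matter, the sketch below (hypothetical helpers, not the GaussianPartialTransferEntropy code) simulates a common driver \(Z\) that reaches \(X\) after one step and \(Y\) after two, so the past of \(X\) predicts \(Y\) only through \(Z\). Plain Gaussian TE is clearly positive (about \(\tfrac{1}{2}\log(2/1.2) \approx 0.26\) nats for these coefficients), while conditioning on the past of \(Z\) drives it to roughly zero.

```python
import numpy as np


def lagged(series, lags, start):
    """Columns series[t-1], ..., series[t-lags] for rows t = start, ..., len(series) - 1."""
    n = len(series)
    return np.column_stack([series[start - l : n - l] for l in range(1, lags + 1)])


def residual_logdet(target, regressors):
    """log det of the OLS residual covariance of `target` on an intercept plus `regressors`."""
    design = np.column_stack([np.ones(len(target)), regressors])
    coef, *_ = np.linalg.lstsq(design, target, rcond=None)
    resid = target - design @ coef
    _, logdet = np.linalg.slogdet(np.atleast_2d(np.cov(resid, rowvar=False)))
    return logdet


def gaussian_te(x, y, z=None, lags=2):
    """TE X -> Y in nats; if z is given, its past is added to both regressions (partial TE)."""
    target = y[lags:]
    controls = [lagged(y, lags, lags)]
    if z is not None:
        controls.append(lagged(z, lags, lags))
    base = np.column_stack(controls)
    full = np.column_stack([base, lagged(x, lags, lags)])
    return 0.5 * (residual_logdet(target, base) - residual_logdet(target, full))


rng = np.random.default_rng(3)
n = 20_000
z = rng.standard_normal(n)
x = np.zeros(n)
y = np.zeros(n)
x[1:] = z[:-1] + 0.5 * rng.standard_normal(n - 1)  # X_t = Z_{t-1} + noise
y[2:] = z[:-2] + rng.standard_normal(n - 2)        # Y_t = Z_{t-2} + noise

te_plain = gaussian_te(x, y, lags=2)         # spurious X -> Y through the shared driver
te_partial = gaussian_te(x, y, z=z, lags=2)  # close to 0 once Z's past is controlled
print(te_plain, te_partial)
```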

Gaussian self-entropy / information storage

Information storage is

\[
S_Y = I(Y_t; Y_t^-) = H(Y_t) - H(Y_t \mid Y_t^-).
\]

It quantifies how predictable the present is from the process’s own past.
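For a univariate Gaussian process both entropies reduce to log-variances, so storage is half the log-ratio of the total variance to the one-step prediction-error variance. A minimal sketch (hypothetical function, not the GaussianSelfEntropy code) checks this on an AR(1) process \(Y_t = \phi Y_{t-1} + \varepsilon_t\), for which the analytic value is \(-\tfrac{1}{2}\log(1 - \phi^2)\), about 0.51 nats at \(\phi = 0.8\).

```python
import numpy as np


def gaussian_storage(y, lags=1):
    """I(Y_t ; Y_t^-) in nats for a univariate series: 0.5 * log(var(Y_t) / var(residual))."""
    n = len(y)
    target = y[lags:]
    past = np.column_stack([y[lags - l : n - l] for l in range(1, lags + 1)])
    design = np.column_stack([np.ones(len(target)), past])
    coef, *_ = np.linalg.lstsq(design, target, rcond=None)
    resid = target - design @ coef
    return 0.5 * np.log(np.var(target) / np.var(resid))  # H(Y_t) - H(Y_t | Y_t^-)


phi = 0.8
rng = np.random.default_rng(4)
noise = rng.standard_normal(50_000)
y = np.zeros_like(noise)
for t in range(1, len(y)):
    y[t] = phi * y[t - 1] + noise[t]

storage = gaussian_storage(y, lags=1)
print(storage, -0.5 * np.log(1 - phi**2))  # estimate vs. analytic AR(1) value
```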

Gaussian-copula estimators

GaussianCopulaMutualInformation, GaussianCopulaConditionalMutualInformation, and GaussianCopulaTransferEntropy apply a rank-based (Gaussian copula) transform to each marginal, then use the same Gaussian formulas on the transformed data. On Gaussian data they closely match the plain Gaussian estimators (up to finite-sample effects of the rank transform), but because ranks are unchanged by strictly monotone transformations, they remain stable when the marginals are nonlinearly distorted (e.g. heavy-tailed or skewed). This offers a middle ground between the fully Gaussian estimators and fully nonparametric k-NN estimators.
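One common form of the copula transform maps each column to its ranks, rescales them to \((0, 1)\), and applies the inverse standard-normal CDF. The sketch below assumes that form (the library's exact tie handling and rescaling are not stated here), uses only NumPy and the standard-library `statistics.NormalDist`, and compares the plain and copula Gaussian MI on a pair whose second variable is passed through `exp`. Since MI is invariant to that monotone map, the true value is still \(-\tfrac{1}{2}\log(1 - \rho^2)\).

```python
import numpy as np
from statistics import NormalDist


def copula_normalize(data):
    """Replace each column by standard-normal scores of its ranks (ties broken by position)."""
    data = np.asarray(data, dtype=float)
    n = data.shape[0]
    ranks = np.argsort(np.argsort(data, axis=0), axis=0) + 1  # ranks 1..n per column
    uniforms = ranks / (n + 1)                                # strictly inside (0, 1)
    return np.vectorize(NormalDist().inv_cdf)(uniforms)       # inverse standard-normal CDF


def gaussian_mi(x, y):
    """I(X;Y) in nats: 0.5 * log(det S_x * det S_y / det S_xy) from the joint sample covariance."""
    x = np.asarray(x, dtype=float).reshape(len(x), -1)
    y = np.asarray(y, dtype=float).reshape(len(y), -1)
    cov = np.cov(np.column_stack([x, y]), rowvar=False)
    dx = x.shape[1]
    _, ld_x = np.linalg.slogdet(cov[:dx, :dx])
    _, ld_y = np.linalg.slogdet(cov[dx:, dx:])
    _, ld_xy = np.linalg.slogdet(cov)
    return 0.5 * (ld_x + ld_y - ld_xy)


rng = np.random.default_rng(5)
rho = 0.6
latent = rng.multivariate_normal([0.0, 0.0], [[1.0, rho], [rho, 1.0]], size=20_000)
x, y = latent[:, 0], np.exp(latent[:, 1])  # Y gets a skewed, heavy-tailed marginal

mi_plain = gaussian_mi(x, y)                         # Gaussian formula on raw values
scores = copula_normalize(np.column_stack([x, y]))
mi_copula = gaussian_mi(scores[:, 0], scores[:, 1])  # same formula after the copula transform
mi_true = -0.5 * np.log(1 - rho**2)                  # population MI of (X, exp(latent Y))
print(mi_plain, mi_copula, mi_true)
```

Here the plain estimate is pulled down by the distorted marginal, while the copula estimate stays close to the true value.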

Why use the Gaussian family

It is usually the fastest estimator family in the library.

It has a clear regression interpretation that is familiar to users of VAR and Granger-causality style models.

It works well as a baseline even when you later move to KSG or kernel-based estimators.

It provides a natural analytical significance test through the F-test on restricted vs unrestricted regressions.
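For a univariate target, Gaussian TE and the Granger F statistic are both monotone functions of the same ratio of residual sums of squares, which is why the F-test pairs naturally with this family: \(F = (e^{2\,\mathrm{TE}} - 1)\,\mathrm{df}/q\), with \(q\) driver coefficients and \(\mathrm{df}\) unrestricted residual degrees of freedom. The sketch below shows one standard form of the test, using SciPy's `scipy.stats.f.sf` for the tail probability. The helper names are hypothetical, and the library's `p_value_` may be computed differently.

```python
import numpy as np
from scipy import stats


def ols_rss(target, regressors):
    """Residual sum of squares and number of coefficients (intercept included)."""
    design = np.column_stack([np.ones(len(target)), regressors])
    coef, *_ = np.linalg.lstsq(design, target, rcond=None)
    resid = target - design @ coef
    return float(resid @ resid), design.shape[1]


def te_with_f_test(x, y, lags=1):
    """Gaussian TE X -> Y (nats) plus the F-test of H0: the past of X adds nothing."""
    n = len(y)
    target = y[lags:]
    y_past = np.column_stack([y[lags - l : n - l] for l in range(1, lags + 1)])
    x_past = np.column_stack([x[lags - l : n - l] for l in range(1, lags + 1)])
    rss_r, _ = ols_rss(target, y_past)                               # restricted model
    rss_u, k_u = ols_rss(target, np.column_stack([y_past, x_past]))  # unrestricted model
    q = lags                      # number of restrictions: the driver coefficients
    df_resid = len(target) - k_u  # residual degrees of freedom of the unrestricted model
    f_stat = ((rss_r - rss_u) / q) / (rss_u / df_resid)
    p_value = stats.f.sf(f_stat, q, df_resid)
    te = 0.5 * np.log(rss_r / rss_u)  # so f_stat == (exp(2 * te) - 1) * df_resid / q
    return te, f_stat, p_value


rng = np.random.default_rng(6)
n = 2_000
x = rng.standard_normal(n)
noise = rng.standard_normal((2, n))
y_coupled = np.zeros(n)
y_null = np.zeros(n)
for t in range(1, n):
    y_coupled[t] = 0.5 * y_coupled[t - 1] + 0.3 * x[t - 1] + noise[0, t]
    y_null[t] = 0.5 * y_null[t - 1] + noise[1, t]

te_c, f_c, p_c = te_with_f_test(x, y_coupled, lags=2)
te_n, f_n, p_n = te_with_f_test(x, y_null, lags=2)
print(te_c, f_c, p_c)  # small TE, but a very small p-value
print(te_n, f_n, p_n)  # TE near 0, p-value not systematically small
```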

When to prefer it

Use Gaussian estimators when:

your sample size is modest,

you need fast lag or delay scans,

interpretability matters more than flexibility,

or you want a first-pass diagnostic before running more expensive nonparametric estimators.

Typical use cases

Finance: factor-to-asset lead-lag analysis, market microstructure influence, baseline directional dependence screening.

Neuroscience: approximate linear interactions, especially in preprocessing or quick exploratory pipelines.

Physiology: autoregulatory dynamics where storage and directed influence are both of interest.

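How to use them

```python
import numpy as np

from xyz import (
    GaussianPartialTransferEntropy,
    GaussianSelfEntropy,
    GaussianTransferEntropy,
)

# Toy data: 2000 samples of 3 independent white-noise channels,
# so the true TE, partial TE and storage are all zero here.
data = np.random.randn(2000, 3)

# Transfer entropy from column 0 (driver) to column 1 (target).
te = GaussianTransferEntropy(
    driver_indices=[0],
    target_indices=[1],
    lags=2,
    tau=1,
    delay=1,
).fit(data)

# Same direction, conditioning on column 2 as a potential confounder.
pte = GaussianPartialTransferEntropy(
    driver_indices=[0],
    target_indices=[1],
    conditioning_indices=[2],
    lags=2,
).fit(data)

# Information storage of column 1: predictability from its own past.
se = GaussianSelfEntropy(target_indices=[1], lags=2).fit(data)

print(te.transfer_entropy_, te.p_value_)
print(pte.transfer_entropy_, pte.p_value_)
print(se.self_entropy_, se.p_value_)
```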

Parameter guidance

lags controls how much of the past is included.

tau controls the spacing between lagged coordinates.

delay controls the source-to-target interaction lag when you want a TRENTOOL-like delay scan.

conditioning_indices should include plausible confounders when using partial TE.
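The exact index convention behind lags, tau and delay is not spelled out here, so the sketch below assumes a common TRENTOOL-like layout: the target past is \(Y_{t-1}, Y_{t-1-\tau}, \ldots\) and the driver past is \(X_{t-\delta}, X_{t-\delta-\tau}, \ldots\), with lags terms each. `embed_past` and `build_design` are hypothetical helpers; the toy series store their own time index so the selected rows are easy to read.

```python
import numpy as np


def embed_past(series, lags, tau, offset, start):
    """Rows t = start..n-1; columns series[t - offset - j*tau] for j = 0..lags-1."""
    n = len(series)
    cols = [series[start - offset - j * tau : n - offset - j * tau] for j in range(lags)]
    return np.column_stack(cols)


def build_design(x, y, lags=2, tau=1, delay=1):
    """Hypothetical layout: target past starts at lag 1, driver past starts at lag `delay`."""
    if delay < 1:
        raise ValueError("delay must be at least 1 so that only past driver values are used")
    # First time index for which every required past sample exists.
    start = max(1, delay) + (lags - 1) * tau
    target = y[start:]
    y_past = embed_past(y, lags, tau, offset=1, start=start)
    x_past = embed_past(x, lags, tau, offset=delay, start=start)
    return target, y_past, x_past


x = np.arange(10.0)          # x[t] == t, so each entry reveals its time index
y = 100.0 + np.arange(10.0)  # y[t] == 100 + t
target, y_past, x_past = build_design(x, y, lags=2, tau=2, delay=3)
print(target[0], y_past[0], x_past[0])  # y[5]; [y[4], y[2]]; [x[2], x[0]]
```

Larger lags, tau or delay all push start later, so each of them also shortens the usable sample.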

Practical advice

Centering and scaling are sensible defaults for most applications.

If TE changes dramatically with small lag changes, the data may need a more explicit embedding search rather than a hand-picked lag.

Use the Gaussian family as the computational backbone for model-selection sweeps, then confirm important findings with KSG where nonlinear structure is plausible.
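One simple form of explicit embedding search, shown purely as an illustration (the library may use a different criterion or none), is to choose the number of target lags that minimizes the Bayesian information criterion (BIC) of a linear autoregression. Every candidate is fitted on the same target rows so the scores are comparable. The function names are hypothetical.

```python
import numpy as np


def ar_bic(target, past):
    """BIC of an OLS autoregression with intercept (Gaussian likelihood, up to a constant)."""
    design = np.column_stack([np.ones(len(target)), past])
    coef, *_ = np.linalg.lstsq(design, target, rcond=None)
    resid = target - design @ coef
    m = len(target)
    return m * np.log(resid @ resid / m) + design.shape[1] * np.log(m)


def select_lags(y, max_lags=8):
    """Return the lag count in 1..max_lags with the lowest BIC on a common sample."""
    n = len(y)
    target = y[max_lags:]  # same target rows for every candidate
    scores = {}
    for lags in range(1, max_lags + 1):
        past = np.column_stack([y[max_lags - l : n - l] for l in range(1, lags + 1)])
        scores[lags] = ar_bic(target, past)
    return min(scores, key=scores.get)


rng = np.random.default_rng(7)
noise = rng.standard_normal(5_000)
y = np.zeros_like(noise)
for t in range(3, len(y)):
    y[t] = 0.4 * y[t - 1] + 0.3 * y[t - 3] + noise[t]  # true autoregressive order is 3

best = select_lags(y, max_lags=8)
print(best)  # typically 3
```

A hand-picked lags=1 or lags=2 would miss the lag-3 dependence in this series, which is the kind of sensitivity the advice above warns about.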

Interactive example

The figure below shows Gaussian TE as a function of the assumed interaction delay in a synthetic linear system. The peak should occur near the true delay.
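A delay scan of this kind can be sketched in NumPy. The sketch simulates \(Y_t = 0.5Y_{t-1} + 0.6X_{t-3} + \varepsilon_t\) and computes Gaussian TE using one target lag and a single driver sample \(X_{t-\delta}\) for each candidate delay \(\delta\), with all delays evaluated on the same rows. It is a simplified, hypothetical illustration (no tau, single lags), not the code that produced the figure.

```python
import numpy as np


def te_at_delay(x, y, delay, max_delay):
    """Gaussian TE (nats) with one target lag Y_{t-1} and one driver sample X_{t-delay}."""
    n = len(y)
    target = y[max_delay:]                     # common rows for every delay
    y_past = y[max_delay - 1 : n - 1]          # Y_{t-1}
    x_past = x[max_delay - delay : n - delay]  # X_{t-delay}

    def log_rss(*regressors):
        design = np.column_stack([np.ones(len(target)), *regressors])
        coef, *_ = np.linalg.lstsq(design, target, rcond=None)
        resid = target - design @ coef
        return np.log(resid @ resid)

    return 0.5 * (log_rss(y_past) - log_rss(y_past, x_past))


rng = np.random.default_rng(8)
n, true_delay = 5_000, 3
x = rng.standard_normal(n)
noise = rng.standard_normal(n)
y = np.zeros(n)
for t in range(true_delay, n):
    y[t] = 0.5 * y[t - 1] + 0.6 * x[t - true_delay] + noise[t]

delays = list(range(1, 7))
scan = [te_at_delay(x, y, d, max_delay=max(delays)) for d in delays]
best_delay = delays[int(np.argmax(scan))]
for d, value in zip(delays, scan):
    print(d, round(float(value), 4))
print("peak at delay", best_delay)
```

Away from the true delay the driver sample carries no extra information about \(Y_t\), so those TE values stay near zero and the scan shows a single sharp peak.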