Discrete (binning) estimators#

The discrete family is intended for symbolic processes or for continuous data that you deliberately quantize into a small number of states.

Implemented classes#

xyz.DiscreteTransferEntropy

xyz.DiscretePartialTransferEntropy

xyz.DiscreteSelfEntropy

Mathematics#

These estimators compute empirical probabilities from counts of repeated states in embedded observation matrices.

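If \(Y_t\) is the current target state, \(Y_t^-\) is its embedded past, and \(X_t^-\) is the embedded past of the driver, then transfer entropy takes the standard form of a difference between two conditional entropies:

\[
TE_{X \to Y} = H(Y_t \mid Y_t^-) - H(Y_t \mid Y_t^-, X_t^-)
\]

Each entropy term is evaluated from empirical frequencies, where \(n(s)\) is the number of times state \(s\) occurs among \(N\) observations:

\[
\hat{p}(s) = \frac{n(s)}{N}, \qquad \hat{H}(S) = -\sum_{s} \hat{p}(s) \log \hat{p}(s)
\]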
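The counting procedure can be sketched in plain Python. This is not the xyz implementation: it assumes a single lagged sample for both the target past and the driver past, uses natural logarithms, and expands each conditional entropy as a difference of joint entropies. The function names plugin_entropy and plugin_te_lag1 are hypothetical.

```python
import math
import random
from collections import Counter


def plugin_entropy(states):
    """Entropy in nats estimated from the relative frequency of each state."""
    n = len(states)
    counts = Counter(states)
    return -sum((k / n) * math.log(k / n) for k in counts.values())


def plugin_te_lag1(driver, target):
    """TE driver -> target with one lagged sample of each series (assumed embedding)."""
    yt = target[1:]    # current target state
    yp = target[:-1]   # target past
    xp = driver[:-1]   # driver past
    # H(Yt | Yp) = H(Yt, Yp) - H(Yp)
    h_given_own_past = plugin_entropy(list(zip(yt, yp))) - plugin_entropy(yp)
    # H(Yt | Yp, Xp) = H(Yt, Yp, Xp) - H(Yp, Xp)
    h_given_both = plugin_entropy(list(zip(yt, yp, xp))) - plugin_entropy(list(zip(yp, xp)))
    return h_given_own_past - h_given_both


# Toy system: y is a one-step delayed copy of a random binary series x.
rng = random.Random(0)
x = [rng.randint(0, 1) for _ in range(2000)]
y = [0] + x[:-1]

te_xy = plugin_te_lag1(x, y)
te_yx = plugin_te_lag1(y, x)
print(f"TE x->y = {te_xy:.3f} nats, TE y->x = {te_yx:.3f} nats")
```

Because y copies x with a one-step delay, the forward estimate should approach log 2 nats, while the reverse estimate should stay close to zero.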

Quantization#

When quantize=True, xyz applies MATLAB-compatible uniform quantization with c bins before counting discrete states. This is useful for ITS-style parity and for exploratory symbolic analysis, but it also introduces a modeling choice: the result now depends on the quantization scheme.
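As a rough illustration of what uniform quantization does, the sketch below maps a continuous series onto c equal-width bins spanning its observed range. The function name uniform_quantize and the edge handling (levels numbered from 0, the maximum clamped into the top bin) are assumptions made for this example; the MATLAB-compatible scheme in xyz may differ in those details.

```python
def uniform_quantize(values, c):
    """Map each value to an integer level in 0..c-1 using c equal-width bins.

    Assumed scheme for illustration only: bins span [min(values), max(values)].
    """
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant series has a single state.
        return [0] * len(values)
    width = (hi - lo) / c
    # The maximum would land in bin c, so clamp it into the top bin c-1.
    return [min(int((v - lo) / width), c - 1) for v in values]


series = [0.0, 0.1, 0.45, 0.5, 0.9, 1.0]
coarse = uniform_quantize(series, 2)
fine = uniform_quantize(series, 4)
print(coarse)
print(fine)
```

The same series produces different symbol sequences for different values of c, which is why any downstream estimate depends on the quantization choice.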

Why use discrete estimators#

They are natural for genuinely discrete state spaces.

They are easy to interpret because they reduce everything to frequency tables.

They are often a useful pedagogical baseline for understanding transfer entropy (TE) and partial transfer entropy (PTE).

When to use them#

Use the discrete family when:

your data are already categorical or symbolic,

you want to compare multiple coarse quantizations of a continuous process,

or you want a transparent state-counting baseline before moving to KSG or Gaussian estimators.

Typical use cases#

Symbolic dynamics and regime switching.

Discretized market states, such as up/flat/down returns.

Binned neural or physiological activity states.
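For the market-state case, a symbolization step might look like the following sketch. The helper symbolize_returns and the dead-band threshold are hypothetical and are not part of xyz; the resulting integer symbols are the kind of already-discrete input that the discrete family is meant for.

```python
def symbolize_returns(returns, threshold):
    """Map each return to +1 (up), 0 (flat) or -1 (down).

    Returns whose magnitude does not exceed `threshold` are treated as flat.
    """
    symbols = []
    for r in returns:
        if r > threshold:
            symbols.append(1)
        elif r < -threshold:
            symbols.append(-1)
        else:
            symbols.append(0)
    return symbols


returns = [0.02, -0.001, -0.03, 0.0, 0.004]
states = symbolize_returns(returns, threshold=0.005)
print(states)
```

The width of the flat band is a modeling choice in the same way as the number of bins, so it is worth checking how sensitive the results are to it.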

How to use them#

    import numpy as np

    from xyz import (
        DiscretePartialTransferEntropy,
        DiscreteSelfEntropy,
        DiscreteTransferEntropy,
    )

    # Synthetic continuous data: 2000 samples of 3 series (one per column).
    data = np.random.randn(2000, 3)

    # TE from series 0 to series 1, quantizing into 8 bins before counting.
    te = DiscreteTransferEntropy(
        driver_indices=[0],
        target_indices=[1],
        lags=1,
        c=8,
        quantize=True,
    ).fit(data)

    # The same pair, conditioned on series 2.
    pte = DiscretePartialTransferEntropy(
        driver_indices=[0],
        target_indices=[1],
        conditioning_indices=[2],
        lags=1,
        c=8,
    ).fit(data)

    # Self entropy of series 1 with two lags.
    se = DiscreteSelfEntropy(target_indices=[1], lags=2, c=8).fit(data)

    print(te.transfer_entropy_)
    print(pte.transfer_entropy_)
    print(se.self_entropy_)

Practical advice#

c too small merges distinct states and may underfit.

c too large creates sparse tables and unstable estimates.

If estimates change dramatically with the number of bins, report that sensitivity rather than hiding it.

In higher-dimensional embeddings, the discrete state space grows quickly, so KSG or Gaussian estimators may become more reliable.
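A rough count illustrates the growth. The sketch assumes that every embedded component takes one of c levels and that the joint frequency table has one axis per component; this is an assumption about the table layout for the sake of the arithmetic, not a description of xyz internals. The helper names are hypothetical.

```python
def joint_cells(c, n_components):
    """Number of cells in a joint frequency table with c levels per component."""
    return c ** n_components


def mean_samples_per_cell(n_samples, c, n_components):
    """Average number of observations available per cell of the joint table."""
    return n_samples / joint_cells(c, n_components)


n_samples, c = 2000, 8
cases = [
    ("TE, one lag: current target, target past, driver past", 3),
    ("PTE, one lag, one conditioning series", 4),
]
for label, n_components in cases:
    cells = joint_cells(c, n_components)
    density = mean_samples_per_cell(n_samples, c, n_components)
    print(f"{label}: {cells} cells, {density:.2f} samples per cell")
```

With 2000 samples and 8 bins, the three-component table has 512 cells and about 3.9 samples per cell on average, while adding one conditioning component gives 4096 cells and fewer than one sample per cell.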

Interactive example#

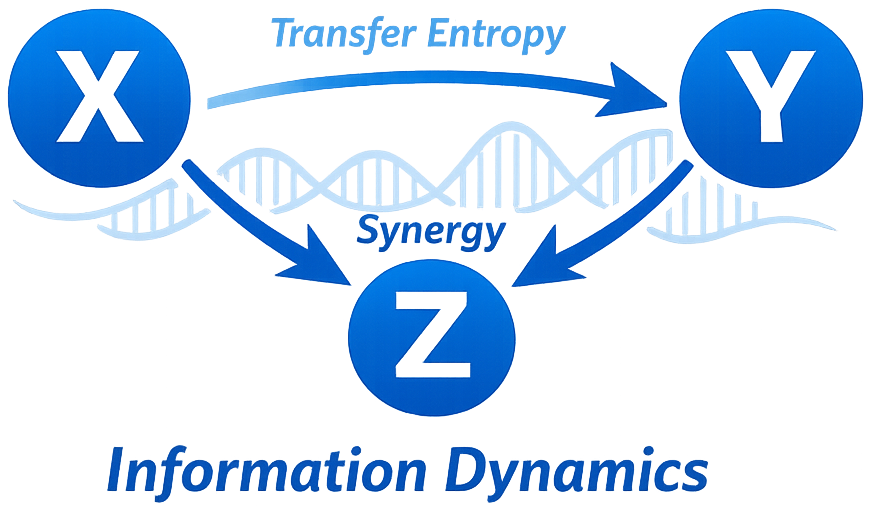

The plot shows discrete TE as a function of the number of quantization bins in a synthetic lagged system. This is a useful diagnostic because an estimate that appears only within one narrow range of bin counts is often not robust.
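A bin-count sweep of this kind can be reproduced with a small self-contained sketch. It does not call xyz: it uses a hypothetical equal-width quantizer and a frequency-count TE with one lag, applied to an assumed synthetic system in which y follows x with a one-step delay. The coupling strength, noise level, sample size and list of bin counts are arbitrary choices for illustration.

```python
import math
import random
from collections import Counter


def entropy(states):
    """Entropy in nats from relative frequencies."""
    n = len(states)
    return -sum(k / n * math.log(k / n) for k in Counter(states).values())


def quantize(values, c):
    """Equal-width quantization over the observed range (assumed scheme)."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / c
    return [min(int((v - lo) / width), c - 1) for v in values]


def binned_te(driver, target, c):
    """Frequency-count TE driver -> target with one lag after c-bin quantization."""
    x, y = quantize(driver, c), quantize(target, c)
    yt, yp, xp = y[1:], y[:-1], x[:-1]
    return (entropy(list(zip(yt, yp))) - entropy(yp)
            - entropy(list(zip(yt, yp, xp))) + entropy(list(zip(yp, xp))))


# Synthetic lagged system: y depends on the previous value of x plus noise.
rng = random.Random(0)
n = 4000
x = [rng.gauss(0.0, 1.0) for _ in range(n)]
y = [0.0] + [0.8 * x[t - 1] + 0.6 * rng.gauss(0.0, 1.0) for t in range(1, n)]

# Estimate both directions for each bin count.
sweep = {c: (binned_te(x, y, c), binned_te(y, x, c)) for c in (2, 3, 4, 6, 8, 12, 16)}
for c, (forward, reverse) in sweep.items():
    print(f"c={c:2d}  TE x->y = {forward:.3f}  TE y->x = {reverse:.3f}")
```

Reporting the uncoupled direction alongside the coupled one helps separate a real effect from the upward bias that sparse tables introduce as the number of bins grows.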